Animations created for one MetaHuman will run on other MetaHumans, so users can easily reuse a single performance across multiple Unreal Engine characters or projects. Once in Unreal Engine, users can animate the digital human asset with a range of performance capture tools or keyframe it manually. They can use Unreal Engine’s Live Link Face iOS app, and Epic is also currently working with vendors on providing support for ARKit, Faceware, JALI Inc., Speech Graphics, Dynamixyz, DI4D, Digital Domain, and Cubic Motion solutions.

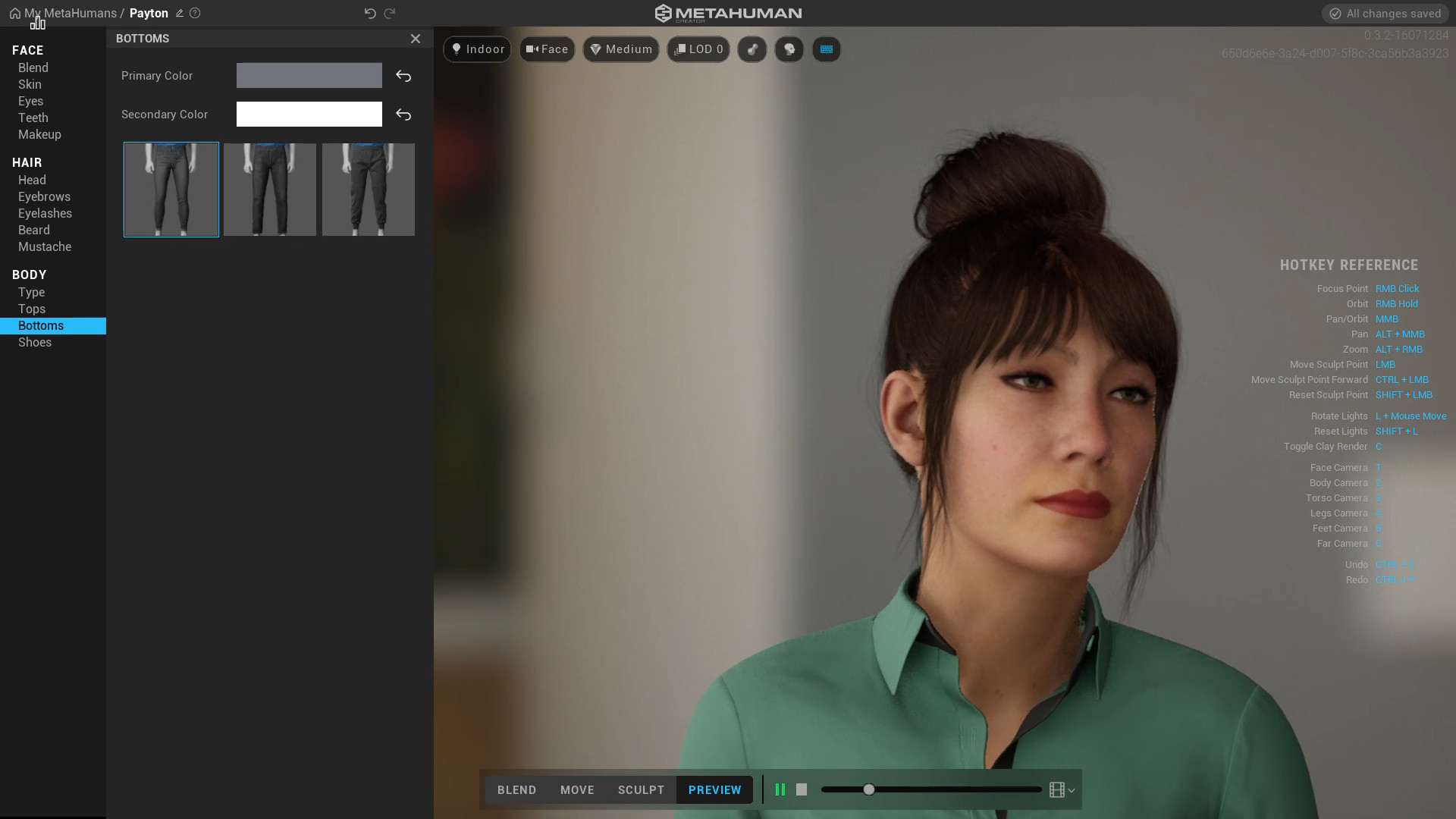

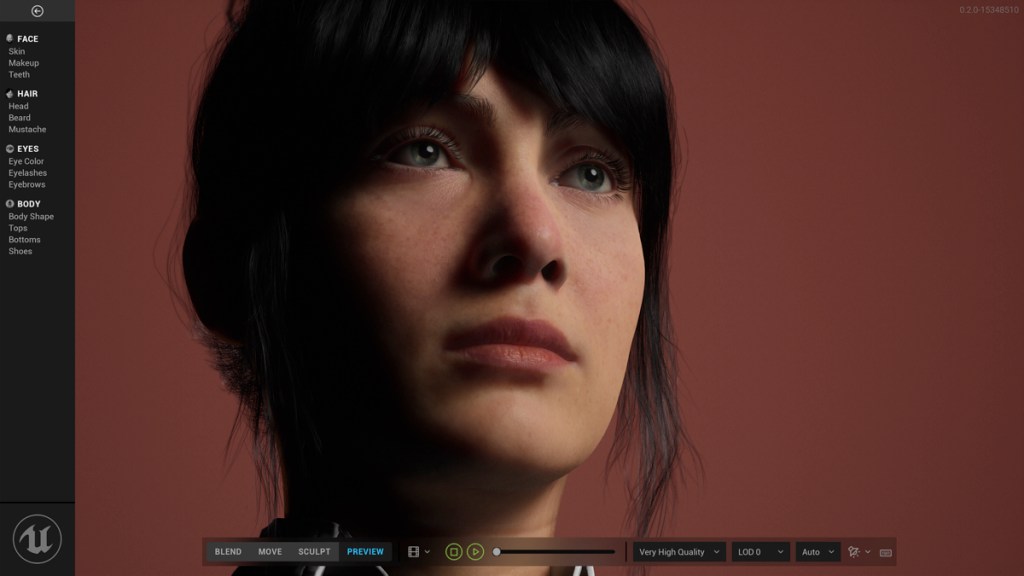

Users will also get the source data in the form of a Maya file, including meshes, skeleton, facial rig, animation controls, and materials. When ready, users can download the asset via Quixel Bridge, fully rigged and ready for animation and motion capture in Unreal Engine, complete with LODs. Users can apply a variety of hairstyles that use Unreal Engine’s strand-based hair system, or hair cards for lower-end platforms. There’s also a set of example clothing to choose from, as well as 18 differently proportioned body types. Users can choose a starting point by selecting a number of preset faces to contribute to their human from the range of samples available in the database. As adjustments are made, MetaHuman Creator blends between actual examples in the library in a plausible, data-constrained way.

MetaHuman Creator enables users to easily create new characters through intuitive workflows that let them sculpt and craft results as desired.
